Ditch the proverbial laundry list and build impactful reports with evidence of change that leaders, partners and the public can trust and act on.

1. Impact reporting is now a credibility test, because stakeholders expect organisations to explain outcomes clearly and meaningfully, grounded in real evidence rather than polished activity lists.

2. The SHARE framework prevents overclaiming by keeping reporting prioritised, contextual, measured, data-responsible and genuinely informative for the reader.

3. Impact reporting works best as a repeatable habit (built on clear intent, empathy for the reader, calibrated claims and a coherent narrative) rather than a last-minute, reactive effort to satisfy stakeholder demands.

During a board meeting, a member asks a familiar question: “So, what changed?” The team has slides on programmes launched, partnerships signed, papers published, people trained, communities reached. Numbers and figures abound, but the question remains up in the air. Activities are not the same as outcomes and a discerning audience can swiftly tell fact from fluff.

That gap is where impact communications sits. For years, many organisations treated it as a discretionary exercise—something churned out hurriedly, even haphazardly, when donors and grantors demanded it, when regulators insisted, or when the public asked how funding was used and what it achieved.

That posture is out of line with today’s expectations. Stakeholders are constantly bombarded by claims and polished narratives, and generative AI has made generic—and hyperbolic—content highly accessible. In response, readers increasingly seek out evidence of impact. They want a coherent account of what an organisation set out to change, what it did and what actually moved the needle. On top of that, limits and trade-offs matter as much as achievements.

It is through impact communications that organisations meet these expectations. Explaining outcomes with clarity, it connects intent with action and action with consequence, providing the foundation upon which credible impact reporting is built.

FROM VISIBILITY TO EXPLANATION, NOT PROMOTION

Impact communications shifts the focus from visibility—a cornerstone of general, corporate communications—to explanation, concentrating on making outcomes intelligible. It is a structured way of answering a small set of questions that stakeholders care about, even when they do not phrase them explicitly:

- What did you set out to change?

- What did you do, and why that approach?

- What changed, for whom, and over what timeframe?

- What did you learn, and what will you do next?

Such reporting links actions to tangible social, environmental or economic outcomes. Crucially, it makes the organisation’s logic visible. A strong impact report guides the reader through key messages early, then provides detail for those who want to go deeper.

This definition matters because “impact reporting” is often mistaken for adjacent practices, the main one being ESG disclosures. While it is essential and increasingly mandatory, ESG reporting focuses on risks, governance and performance across environmental, social and governance domains. Impact reporting answers a different question: what outcomes did your work produce beyond your balance sheet and operational metrics?

Impact reporting is also not about listing corporate social responsibility initiatives, which may fail to clarify what changed as a result. Nor should it be confused with marketing communications or treated as a substitute for them. Storytelling has a role, but impact reporting is not a promotional genre. Narratives instead provide the stage for impact, grounding an organisation’s actions and outcomes in context. An impact report’s credibility depends on restraint, with a careful balance of language and claims, and a transparent handling of data and limitations.

Moreover, impact reporting differs from the annual report. Annual reports provide a broad snapshot of financial performance and operational health. Impact reports are more targeted: they focus on outcomes and broader consequences, often using case studies and qualitative evidence alongside metrics.

NO LONGER A “NICE-TO-HAVE”

Many will ask a reasonable question: What problem does impact reporting solve that existing communications do not?

While it is by no means a replacement for general, corporate or marketing communications, all of which serve important roles within an organisation, impact reporting alleviates three pressures that have intensified across sectors.

Firstly, building trust increasingly depends on proof standards. Stakeholders are sceptical of broad claims because they have seen too many of them. They look for specificity, consistent logic and evidence that is presented with honesty. Impact reports are often the first place where an organisation’s credibility is tested, because the content sits directly at the intersection of purpose and proof.

In addition, a clear organisational strategy is upheld by explicit outcomes. Impact reporting is often framed as outward-facing communication, but it also functions as a strategic tool internally. What organisational leaders choose to measure and communicate shapes what people treat as success. When impact is opaque, organisations tend to optimise for outputs (projects completed, beneficiaries reached, number of engagements). A well-crafted impact report takes it a step further, helping teams see progress as something that unfolds over time rather than a singular change. It keeps people grounded in what has moved, what has not—and why—thus strengthening alignment, morale and a sense of purpose.

Lastly, in an era defined by crowded information environments, increasingly saturated with “AI slop”, clarity stands out, and it can influence funding, partnerships and decision-making. Organisations that can explain and defend outcomes with clarity become easier to work with. That matters for funders deciding between proposals, for partners assessing whether collaboration will pay off, for regulators evaluating credibility and for talent determining whether purpose is real or performative.

SHARE: THE QUALITY BAR FOR IMPACT REPORTS

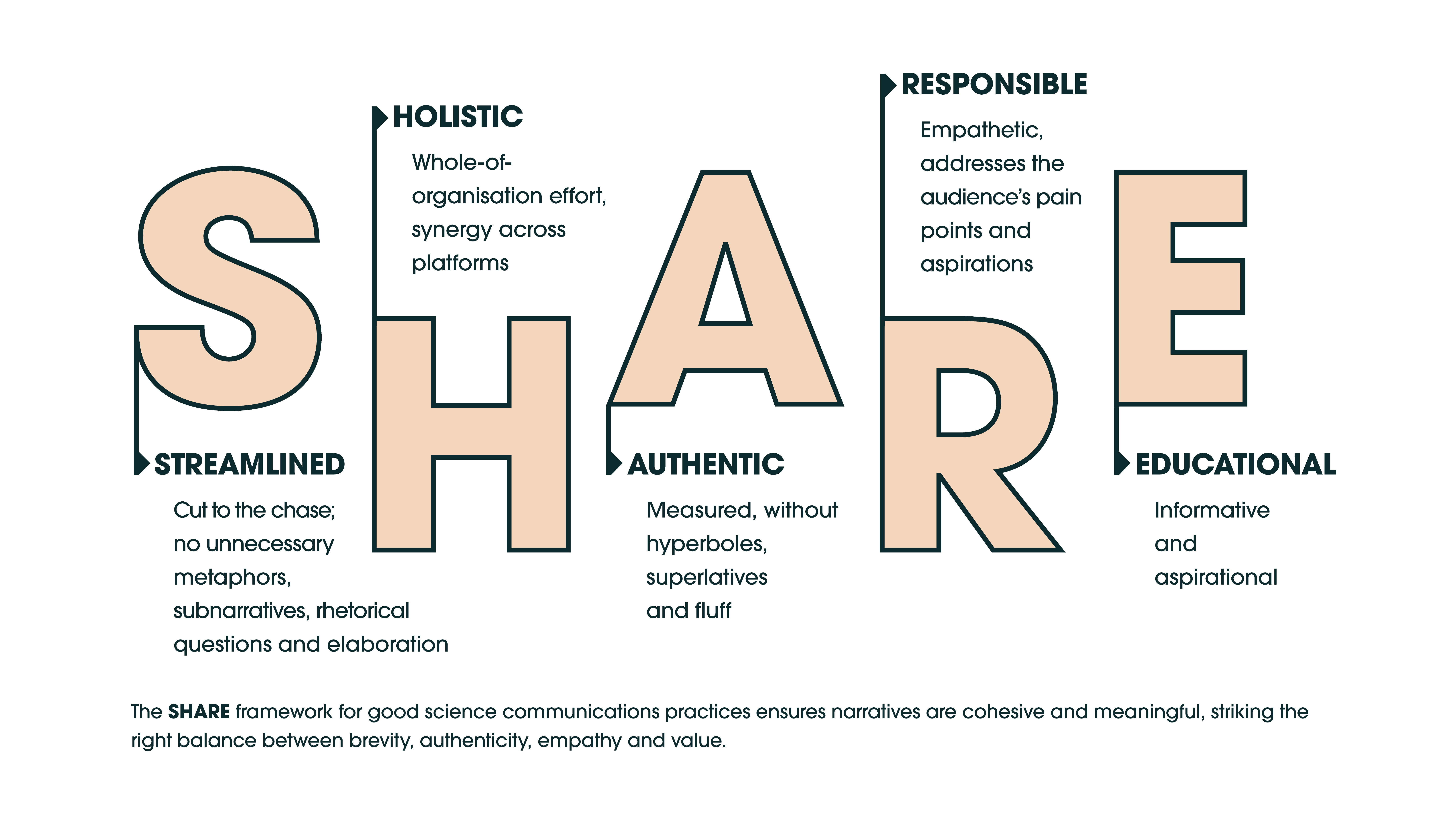

Many organisations do not struggle because they lack effort. They struggle because their reports sprawl, their claims outrun their evidence or their documents read like collections of unrelated achievements. The “SHARE” framework, developed by impact communications consultancy Ernest & Kin, is a useful quality bar because it addresses the most common failure modes directly.

Streamlined: Cut to the chase. Remove unnecessary sub-narratives, rhetorical questions, metaphors and elaboration.

A streamlined impact report does not try to cover everything. It prioritises what matters most, puts key messages early and guides the reader logically. It resists the organisational instinct to include every project to avoid internal disappointment.

Editorial test: If the report were half its length, what would remain? The answer is close to your real impact story.

Holistic: Treat reporting as a whole-of-organisation effort and show the ecosystem that makes impact possible.

Impact is rarely produced by one team alone. It is shaped by partners, policies, infrastructures, communities and, sometimes, competing forces. Holistic reporting shows how these elements interact. It also avoids the credibility trap of claiming sole credit for outcomes that were co-created.

Editorial test: Does the report acknowledge where impact depends on others, and does it make collaboration visible?

Authentic: Measured language, no hyperbole, no superlatives, no fluff.

Authenticity builds trust. Strong impact reports include challenges, uncertainties and areas still in progress. They do not avoid uncomfortable truths. They do not present setbacks as “opportunities” in glossy language. They treat the reader as capable of nuance.

Editorial test: Would a sceptical stakeholder say this sounds honest, or sounds curated?

Responsible: Empathetic and attentive to the audience’s priorities, pain points and aspirations.

Responsible reporting is partly about evidence and ethics, and partly about respect. It anticipates what the audience needs in order to interpret claims correctly. It also takes data integrity seriously, with clear methodologies, ethical sourcing, privacy protection and careful framing of sensitive information, especially in healthcare and human-centred work.

Editorial test: Does the report make it easy for readers to interpret the data without misreading it?

Educational: Informative and aspirational to leave the reader smarter about the world and the issue.

Impact reports often over-focus on the organisation and under-explain the problem. Educational reporting provides context. It shows what is at stake, why the work matters and what might happen if nothing changes. This is where impact reporting becomes more than accountability; it becomes public value.

Editorial test: After reading, does the audience understand the issue better, or only the organisation better?

A useful way to present SHARE is to pair each principle with the failure mode it prevents:

- Streamlined prevents the laundry list.

- Holistic prevents isolated storytelling that ignores systems and partners.

- Authentic prevents inflated claims and credibility loss.

- Responsible prevents misinterpretation and ethical shortcuts.

- Educational prevents reports that read like internal newsletters.

A SIX-STEP PROCESS THAT LEADERS CAN USE

The most common reason impact reporting fails is not lack of intent. It is a process problem. Teams scramble late, assemble whatever data is available, then write backwards from that pile. Avoid this “last-minute compilation” and adopt an approach akin to writing a graduate thesis, with continuous documentation, revision and records that create coherence. The following six steps are designed to be applicable across sectors.

Step 1: Clarify intent, audience and the decision you want to shape

Writing for stakeholders as a generic group guarantees vagueness. Be specific:

- Who is the primary audience: board, funders, partners, staff, policymakers, communities, the public, or a combination of two or more?

- What decision or behaviour should the report influence: funding confidence, partnership readiness, recruitment, policy support, internal alignment?

- What is the appropriate format: standalone report, integrated report section, thematic publication?

Deliverable: a one-page intent brief with audience, purpose and scope.

Step 2: Map what matters most

Focus is the hardest part, because it requires exclusion. Identify the outcomes that matter most to your mission and to stakeholders. Engage stakeholders where possible, since dialogue helps confirm what is relevant to them and shapes the messaging. Use practical constraints to force prioritisation. Two useful prompts:

- If you had only ten pages, what outcomes would you keep?

- Which three to five themes best represent what you are genuinely trying to change?

Deliverable: a shortlist of material impact themes, with a rationale for why these matter.

Step 3: Build an impact inventory

This is where seriousness becomes tangible. Gather evidence across three layers:

- Outputs: what you delivered (services, programmes, innovations, publications, deployments).

- Outcomes: what changed for people, systems or environments over a defined timeframe.

- Limits and unintended effects: where targets were missed, trade-offs emerged or consequences were mixed.

Strengthen the inventory using these strategies:

- Contextualise metrics against targets over time, and against benchmarks where useful.

- Include perspectives from partners and those affected, not just internal voices.

- Consider independent review where appropriate to strengthen accountability.

- Use careful data practices, including privacy and ethical sourcing.

Where possible, look for baseline-and-follow-up patterns, or plausible comparisons that help interpret change. Avoid claiming full causality when the evidence supports contribution; readers accept contribution language when it is explained clearly.

Deliverable: an impact inventory for each theme, recording outputs, outcomes, and limits and unintended effects, with the supporting evidence.

Step 4: Build the narrative spine and guide the reader

A strong impact report is written with the reader in mind. The author must guide the reader rather than forcing them to hunt for key messages. For each theme, use a consistent structure:

- Context or background: what problem you are addressing and why it matters.

- Approach: what you did and why that strategy was chosen.

- Change: what shifted, supported by data and lived experience.

- Learning: what did not work as expected, what you would adjust, what comes next.

It is crucial to use clear language, avoid overstatements and place key findings early, while letting details sit behind summaries for readers who would like a deep dive.

Deliverable: a narrative outline per theme, with evidence embedded and claims calibrated.

Step 5: Design for reach and reuse

Impact reporting should not end with a PDF. Reports can be repurposed into a range of materials that support awareness, recruitment, fundraising and partnerships. The key is to keep the evidence core consistent while tailoring formats for different audiences.

Consider a small set of “derivative products”:

- A short web summary for general readers

- A partner deck focused on outcomes and collaboration opportunities

- An internal version that reinforces staff motivation and focus

- Visual assets that translate data into understandable patterns

Use data visualisation carefully—every chart should have a clear purpose, and captions should provide enough context for the visual to stand alone.

Deliverable: a distribution plan, including audiences, formats and how assets will be reused without fragmenting the message.

Step 6: Maintain momentum

The strongest impact reports are born out of routine efforts, supported by a governance structure:

- Executive sponsor to signal importance and resolve trade-offs

- Cross-functional working group spanning strategy, operations, finance, programme leads and communications

- Clear ownership for data integrity, narrative development and stakeholder engagement

To make impact reporting sustainable, build a realistic cadence. Annual reporting works for some organisations, while others benefit from thematic or biennial impact reports with lighter interim updates. The key is continuity, whereby progress is tracked over time, with comparisons done against prior periods and learning documented. At the end of each reporting cycle, treat the process as a feedback loop:

- What data was difficult to obtain, and why?

- Which outcomes remain poorly measured?

- Where did stakeholder feedback reveal misalignment or blind spots?

- What priorities need refinement as conditions change?

Maintaining this momentum turns impact reporting from a publication cycle into a management habit that strengthens decision-making and accountability.

WHAT IT MEANS TO TAKE IMPACT SERIOUSLY

Impact reporting answers a simple but critical question: Does what you do matter? It is important to acknowledge that the question rarely arrives in that exact form. It appears as due diligence from a partner, scrutiny from a policymaker, scepticism from a community or a board’s demand for clarity. It also appears internally, when staff want to know whether their work is producing outcomes that justify the effort.

Leaders can choose to be reactive and let others define the organisation’s impact through fragments, assumptions or external narratives. They can also choose to be proactive and build the capability to explain outcomes with discipline, honesty and evidence. Ultimately, a strong impact report kills two birds with one stone: it serves as an internal tool that clarifies priorities and strengthens resource allocation, while beaming an external signal of credibility that cultivates trust, partnerships and long-term relevance.

In a world awash with noise and superlatives, the organisations that cut through the static will be those that can show—clearly, ethically and without exaggeration—what has changed because they exist.

THE FOUR NON-NEGOTIABLE QUESTIONS

Every credible impact case study must be able to answer these clearly and convincingly.

1. Why this problem? What systemic or societal issue does this work address, and why does it matter beyond the discipline? This forces clarity on:

2. Why now? What has changed that makes this work timely, necessary or actionable at this moment? This might include:

Without “why now”, the case lacks urgency and risks sounding retrospective or speculative.

3. Why this approach? What is distinctive about the way the problem is being addressed? This is where you demonstrate:

This is often the weakest part of many cases, yet it is where credibility is earned.

4. Why you (or this team/institution/organisation)? Why is this group uniquely positioned to deliver the impact? This is not about prestige or track record alone. It is about:

In short: what makes success plausible, not aspirational.

THE CREDIBILITY QUESTIONS (OFTEN OVERLOOKED)

Once the four core questions are addressed, you can usually push cases further with these:

5. What changed, and for whom? Impact is about change, not activity. Be explicit about:

6. How do you know the change occurred? Evidence matters, especially outside academia. This could include:

Anecdotes alone are rarely sufficient.

7. What would not have happened without this work? This is the counterfactual question. It distinguishes:

If the impact would likely have happened anyway, the case needs reframing.

8. Why is this worth investing in or scaling? This is where impact meets strategy. Address:

This is especially important for funders, partners and policymakers.

A quick stress test

If an impact case study cannot clearly answer:

Julian Tang is Founder and Editor-in-Chief of Ernest & Kin, a Singapore-based science and technology communications consultancy. He works with universities, research institutes and public-sector teams across Asia-Pacific to translate research, programmes and partnerships into credible impact narratives grounded in evidence, strategy and controlled storytelling.

This article is based on a whitepaper, The Art of Impact Reporting, written by Ernest & Kin.